In recent years, record linkage centres have adopted many different models and linkage methods to protect individual privacy as part of their operational processes. With growing demand for linked data, it has been critical for these centres to implement methods that protect privacy yet maximise the benefits that can be derived from data assets. As a result, privacy-preserving record linkage (PPRL) has become a pressing area of inquiry, with much of the focus on Bloom filters. Much of this research has focussed on the security aspects of Bloom filters, such as cryptographic analyses of encoding methods, modifications, and hashing variations. The resulting accuracy, or ‘quality’, of these techniques has often been overlooked. Yet for these techniques to be considered for operational use within large-scale linkage systems, their accuracy must be sufficiently high.

Field-level partial weight curves estimated for a specific dataset produced the best-quality results. The Sørensen–Dice coefficient and Jaccard similarity produced the most consistent results across a spectrum of synthetic and real-world datasets. The use of Bloom filter similarity comparisons for probabilistic record linkage can produce linkage quality comparable to that of Jaro-Winkler string similarities on unencrypted linkages. Probabilistic linkages using Bloom filters benefit significantly from similarity comparisons, with partial weight curves producing the best results even when they are not optimised for the particular dataset.
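To make the comparison methods concrete (Sørensen–Dice, Jaccard and Hamming distance), here is a minimal Python sketch under stated assumptions. It assumes a simple encoding in which each field is split into space-padded bigrams, and each bigram is hashed into an m-bit Bloom filter at k double-hashed positions. The values m=1000 and k=20 are arbitrary illustrations. Each filter is represented as the set of its switched-on bit positions, so the three measures become set operations. The `partial_weight` function is a hypothetical linear curve between the Fellegi-Sunter disagreement and agreement weights. It is not one of the curves estimated in the study, which were derived from the data. Deployed PPRL encodings typically use keyed hashes and further hardening, which this sketch omits.

```python
import hashlib
import math


def bigrams(value: str) -> list[str]:
    """Split a field into overlapping 2-grams, padded with a space at each end."""
    padded = f" {value.lower()} "
    return [padded[i:i + 2] for i in range(len(padded) - 1)]


def bloom_encode(value: str, m: int = 1000, k: int = 20) -> set[int]:
    """Return the set of bit positions switched on in an m-bit Bloom filter.

    Each bigram is mapped to k positions by double hashing (SHA-256 and
    SHA-512). Unkeyed hashes are used here for illustration only.
    """
    positions = set()
    for gram in bigrams(value):
        data = gram.encode("utf-8")
        h1 = int.from_bytes(hashlib.sha256(data).digest(), "big")
        h2 = int.from_bytes(hashlib.sha512(data).digest(), "big")
        for i in range(k):
            positions.add((h1 + i * h2) % m)
    return positions


def dice(a: set[int], b: set[int]) -> float:
    """Sørensen–Dice coefficient: 2|A ∩ B| / (|A| + |B|)."""
    return 2 * len(a & b) / (len(a) + len(b))


def jaccard(a: set[int], b: set[int]) -> float:
    """Jaccard similarity: |A ∩ B| / |A ∪ B|."""
    return len(a & b) / len(a | b)


def hamming(a: set[int], b: set[int]) -> int:
    """Hamming distance: number of bit positions set in exactly one of the two filters."""
    return len(a ^ b)


def partial_weight(similarity: float, m_prob: float, u_prob: float,
                   lower: float = 0.6) -> float:
    """Hypothetical linear partial weight curve (illustrative, not estimated).

    Returns the Fellegi-Sunter disagreement weight log2((1-m)/(1-u)) at or
    below `lower`, the agreement weight log2(m/u) at similarity 1.0, and a
    linear interpolation in between.
    """
    agree = math.log2(m_prob / u_prob)
    disagree = math.log2((1 - m_prob) / (1 - u_prob))
    if similarity <= lower:
        return disagree
    fraction = (similarity - lower) / (1 - lower)
    return disagree + fraction * (agree - disagree)


if __name__ == "__main__":
    a, b = bloom_encode("catherine"), bloom_encode("katherine")
    s = dice(a, b)
    print(f"dice={s:.3f} jaccard={jaccard(a, b):.3f} hamming={hamming(a, b)}")
    print(f"partial weight={partial_weight(s, m_prob=0.95, u_prob=0.01):.2f}")
```

Because the Jaccard score is always at most the Dice score for the same pair of filters, a fixed similarity cut-off means something different for each measure. This is one reason to map each measure to partial weights with its own curve.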

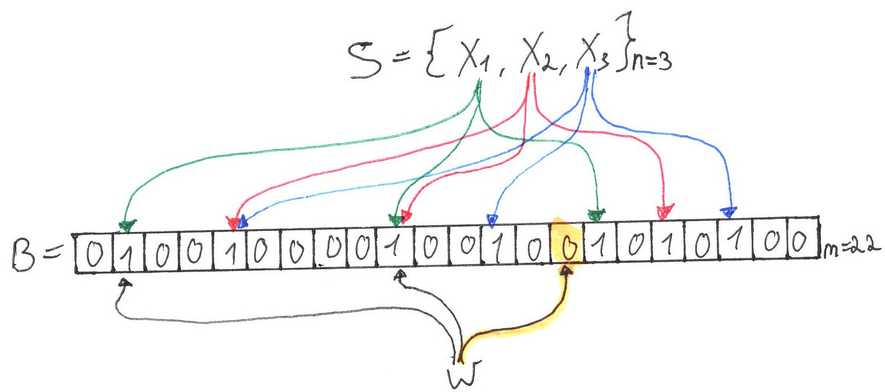

The need for increased privacy protection in data linkage has driven the development of PPRL techniques. A popular technique uses Bloom filters, and cryptographic analyses, modifications, and hashing variations that optimise their privacy have been the focus of much research in this area. Because there are few applications of Bloom filters within a probabilistic framework, however, there is limited information on whether approximate matches between Bloom-filtered fields can improve linkage quality.

In this study, we evaluate the effectiveness of three approximate comparison methods for Bloom filters within the Fellegi-Sunter model of record linkage: the Sørensen–Dice coefficient, Jaccard similarity and Hamming distance. The research used synthetic datasets with introduced errors, which simulate a range of data quality, and a large real-world administrative health dataset. From these, it estimated partial weight curves that convert the similarity scores of each approximate comparison method into partial weights, at both field and dataset level. Deduplication linkages were then run on each dataset using these partial weight curves. This allowed the quality of the approximate comparison techniques to be compared with that of linkages using simple similarity cut-off values and exact matching only. Linkages using approximate comparisons produced significantly better-quality results than those using exact comparisons only.

In Databricks, a Bloom filter index states either that data is definitively not in a file, or that it is probably in the file, with a defined false positive probability (FPP). Databricks supports file-level Bloom filters: each data file can have a single Bloom filter index file associated with it. Before reading a file, Databricks checks the index file, and the file is read only if the index indicates that the file might match a data filter. Databricks always reads the data file if an index does not exist or if a Bloom filter is not defined for a queried column.

Bloom filters support columns with the following input data types: byte, short, int, long, float, double, date, timestamp, and string. Nulls are not added to the Bloom filter, so any null-related filter requires reading the data file. Databricks supports the following data source filters: and, or, in, equals, and equalsnullsafe. Bloom filters are not supported on nested columns.

The size of a Bloom filter depends on the number of elements in the set for which it has been created and on the required FPP. The lower the FPP, the more bits are used per element and the more accurate the filter is, at the cost of more disk space and slower downloads. For example, an FPP of 10% requires 5 bits per element. A Bloom filter index is an uncompressed Parquet file that contains a single row. Indexes are stored in the _delta_index subdirectory relative to the data file and use the same name as the data file with the suffix index.v1.parquet. For example, the index for data file dbfs:/db1/ would be named dbfs:/db1/_delta_index/.index.v1.parquet.
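The sizing rule and the file-skipping behaviour described above can be illustrated with a small Python sketch. It uses the standard formula for the optimal number of bits per element, -ln(p) / (ln 2)^2. For an FPP of 10% this gives about 4.79 bits, which rounds up to the 5 bits quoted above. The `BloomFilter` class and `files_to_read` function are hypothetical illustrations of the concept, not Databricks' index format or API. In the sketch, each "file" has at most one filter. A file without a filter is always read. A null value, which is never added to a filter, forces every file to be read.

```python
import hashlib
import math
from typing import Optional


def bits_per_element(fpp: float) -> float:
    """Optimal bits per element for a target false positive probability: -ln(p) / (ln 2)^2."""
    return -math.log(fpp) / math.log(2) ** 2


def optimal_num_hashes(bits_per_elem: float) -> int:
    """Optimal number of hash functions, (m/n) * ln 2, rounded and at least 1."""
    return max(1, round(bits_per_elem * math.log(2)))


class BloomFilter:
    """Minimal Bloom filter answering 'definitely not present' or 'probably present'."""

    def __init__(self, num_items: int, fpp: float):
        self.m = math.ceil(num_items * bits_per_element(fpp))  # total bits
        self.k = optimal_num_hashes(self.m / num_items)         # hash functions
        self.bits = bytearray(math.ceil(self.m / 8))

    def _positions(self, item: str) -> list[int]:
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1  # force an odd, hence nonzero, step
        return [(h1 + i * h2) % self.m for i in range(self.k)]

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


def files_to_read(indexes: dict[str, Optional[BloomFilter]], value: Optional[str]) -> list[str]:
    """Files that must be scanned for the equality filter `column = value`."""
    if value is None:
        # Nulls are never added to a filter, so a null filter must read every file.
        return list(indexes)
    # A file with no index is always read; otherwise read only on 'probably present'.
    return [name for name, bf in indexes.items() if bf is None or bf.might_contain(value)]


if __name__ == "__main__":
    print(f"{bits_per_element(0.10):.2f} bits per element at 10% FPP")  # 4.79, i.e. roughly 5
    part0 = BloomFilter(num_items=3, fpp=0.10)
    for customer_id in ("c-001", "c-002", "c-003"):
        part0.add(customer_id)
    indexes = {"part-0": part0, "part-1": None}
    print(files_to_read(indexes, "c-002"))  # both: part-0 probably matches, part-1 has no index
    print(files_to_read(indexes, None))     # every file
```

Halving the FPP adds only about 1.44 bits per element (ln 2 / (ln 2)^2 = 1 / ln 2). This is why a lower FPP improves accuracy at a modest, but real, cost in index size.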
